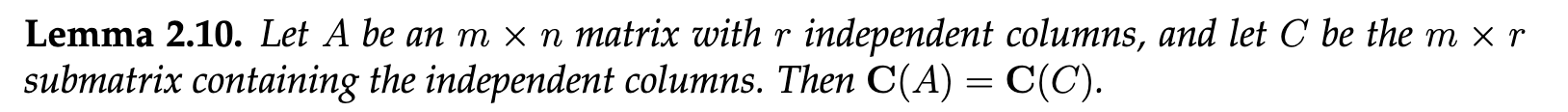

Column Space and Reordering Columns

Reordering columns does not change the rank of a matrix. The independent columns of a matrix span its column space.

Proof

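Let $v_1, \dots, v_r$ represent the independent columns of a matrix $A$, and let $w_1, \dots, w_{n-r}$ represent the dependent columns (ordered as in $A$). The goal is to show that $v_1, \dots, v_r$ already span the column space of $A$, so that the column space of $A$ remains unchanged even if the columns are reordered.

For each $j$, $w_j$ is a linear combination of $v_1, \dots, v_r, w_1, \dots, w_{j-1}$, since this sequence includes all the previous independent columns. This implies that:

$$\operatorname{Span}(v_1, \dots, v_r, w_1, \dots, w_{j-1}) = \operatorname{Span}(v_1, \dots, v_r, w_1, \dots, w_j).$$

Now, consider the span of the independent columns: $\operatorname{Span}(v_1, \dots, v_r)$. By successively adding the dependent columns $w_1, \dots, w_{n-r}$, the resulting span remains the same:

$$\operatorname{Span}(v_1, \dots, v_r) = \operatorname{Span}(v_1, \dots, v_r, w_1) = \dots = \operatorname{Span}(v_1, \dots, v_r, w_1, \dots, w_{n-r}),$$

and the last span is the column space of $A$.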

A span does not depend on the order in which its vectors are listed, so reordering the columns does not alter the column space of the matrix, and therefore does not change its rank.
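The claim about rank can be checked numerically on a small case. The following is a minimal sketch, not part of the original notes: the helper names `rank` and `reorder_columns` and the example matrix are assumptions made for illustration, and the rank is computed by plain Gaussian elimination over exact fractions.

```python
from fractions import Fraction
from itertools import permutations

def rank(rows):
    """Rank of a matrix (list of rows), by Gaussian elimination over exact fractions."""
    m = [[Fraction(x) for x in row] for row in rows]
    n_rows, n_cols = len(m), len(m[0])
    r = 0  # number of pivots found so far
    for c in range(n_cols):
        # Look for a row at or below position r with a nonzero entry in column c.
        pivot = next((i for i in range(r, n_rows) if m[i][c] != 0), None)
        if pivot is None:
            continue  # no pivot in this column
        m[r], m[pivot] = m[pivot], m[r]
        # Eliminate the entries below the pivot.
        for i in range(r + 1, n_rows):
            factor = m[i][c] / m[r][c]
            m[i] = [a - factor * b for a, b in zip(m[i], m[r])]
        r += 1
        if r == n_rows:
            break
    return r

def reorder_columns(rows, order):
    """Return the matrix whose columns are the columns of `rows` in the given order."""
    return [[row[j] for j in order] for row in rows]

# Hypothetical example: the second column is twice the first, so it is dependent.
A = [[1, 2, 0],
     [2, 4, 1],
     [3, 6, 1]]

# Collect the rank of every column reordering of A.
ranks = {rank(reorder_columns(A, order)) for order in permutations(range(3))}
```

All six column orderings of this matrix give the same rank, so `ranks` contains a single value.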

Transpose of a Matrix

The transpose of a matrix is obtained by flipping the matrix over its diagonal, swapping rows with columns. For example, for a matrix $A$, its transpose is denoted by $A^\top$. A useful property is that taking the transpose twice returns the original matrix:

$$(A^\top)^\top = A.$$
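As a small illustration, the transpose of a matrix stored as a list of rows can be sketched as follows. The function name `transpose` and the example matrix are assumptions made for this example only.

```python
def transpose(matrix):
    """Return the transpose of a matrix given as a list of rows."""
    # zip(*matrix) groups the k-th entries of every row, i.e. the k-th column.
    return [list(column) for column in zip(*matrix)]

A = [[1, 2, 3],
     [4, 5, 6]]               # a 2 x 3 matrix

A_t = transpose(A)            # the 3 x 2 transpose
A_tt = transpose(A_t)         # transposing twice
```

Here `A_t` has the rows of `A` as its columns, and `A_tt` is equal to `A` again.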

Symmetric Matrices

A matrix $A$ is symmetric if it equals its transpose, i.e., $A = A^\top$. This can hold only for square matrices, namely those whose entries are mirrored across the diagonal: $a_{ij} = a_{ji}$ for all $i$ and $j$.

Additionally, the column space of a matrix $A$ is the row space of its transpose $A^\top$. This shows a dual relationship between row and column spaces.
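A symmetry check follows directly from the definition. The sketch below uses hypothetical helper names (`transpose`, `is_symmetric`) and example matrices chosen for illustration.

```python
def transpose(matrix):
    """Return the transpose of a matrix given as a list of rows."""
    return [list(column) for column in zip(*matrix)]

def is_symmetric(matrix):
    """True if the matrix equals its transpose (which forces it to be square)."""
    return matrix == transpose(matrix)

S = [[2, 7, 1],
     [7, 3, 5],
     [1, 5, 4]]   # entries mirrored across the diagonal

N = [[1, 2],
     [3, 4]]      # square, but 2 != 3 off the diagonal

R = [[1, 2, 3],
     [4, 5, 6]]   # not square, so it cannot equal its 3 x 2 transpose

results = [is_symmetric(S), is_symmetric(N), is_symmetric(R)]

# The rows of the transpose are exactly the columns of the original matrix.
columns_of_R = [[row[j] for row in R] for j in range(len(R[0]))]
rows_of_R_transpose = transpose(R)
```

The last two lines illustrate the dual relationship: the columns of `R` and the rows of its transpose are the same list of vectors, so they span the same space.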

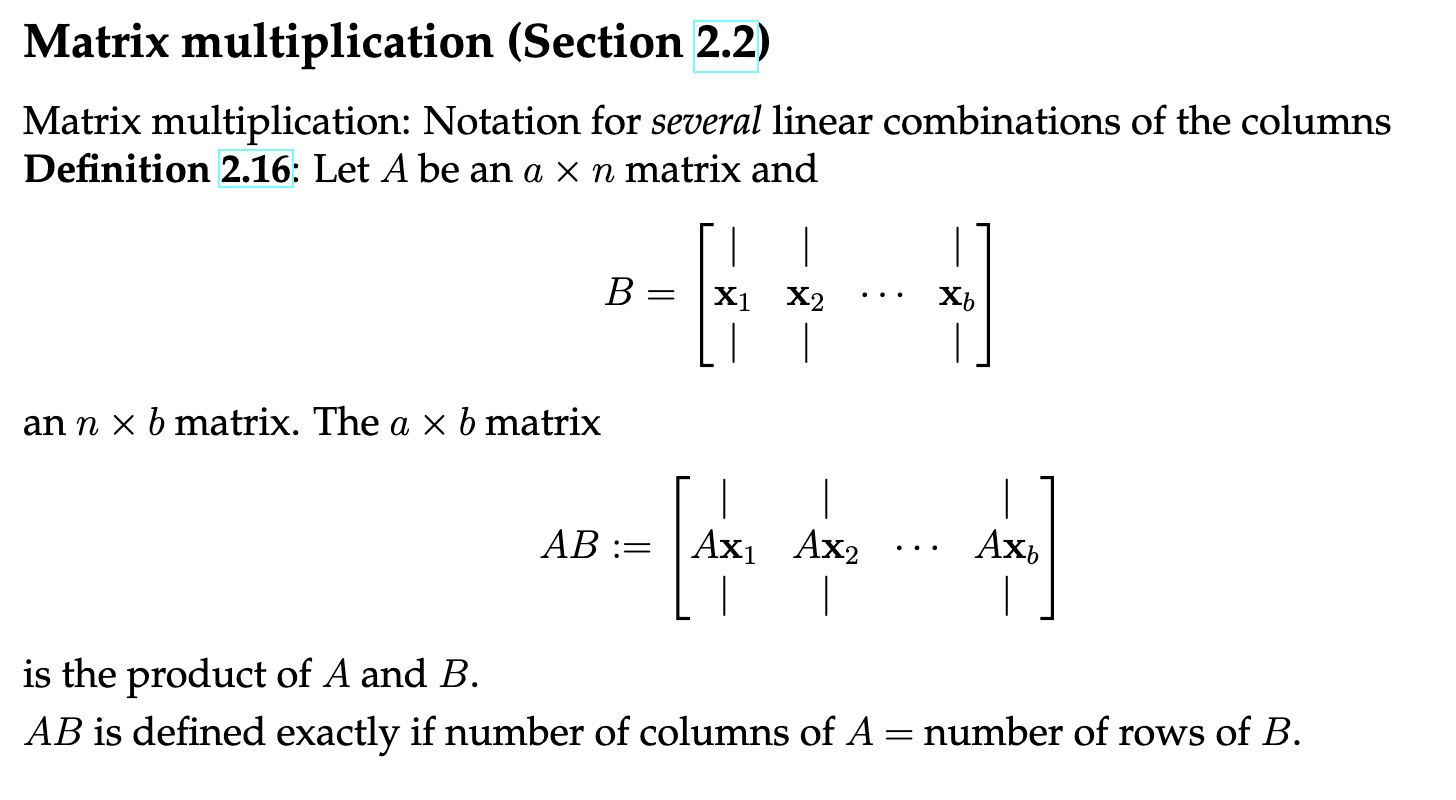

Matrix Multiplication

Matrix multiplication can be understood as performing multiple linear transformations in sequence. Intuitively, applying matrix $A$ first, followed by matrix $B$, is not the same as applying $B$ first and then $A$. Hence, in general, matrix multiplication is not commutative:

$$AB \neq BA.$$
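A concrete pair of transformations makes the order dependence visible. This is a minimal sketch with an assumed helper `matmul` and two example matrices (a 90 degree rotation and a reflection across the x-axis) that are not taken from the notes.

```python
def matmul(A, B):
    """Product of two matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

rotate = [[0, -1],
          [1,  0]]    # rotate the plane by 90 degrees counterclockwise
reflect = [[1,  0],
           [0, -1]]   # reflect across the x-axis

# The product M N acts on a vector x as M (N x), so the right factor is applied first.
rotate_after_reflect = matmul(rotate, reflect)   # reflect first, then rotate
reflect_after_rotate = matmul(reflect, rotate)   # rotate first, then reflect
```

The two products come out as `[[0, 1], [1, 0]]` and `[[0, -1], [-1, 0]]`, which are different matrices.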

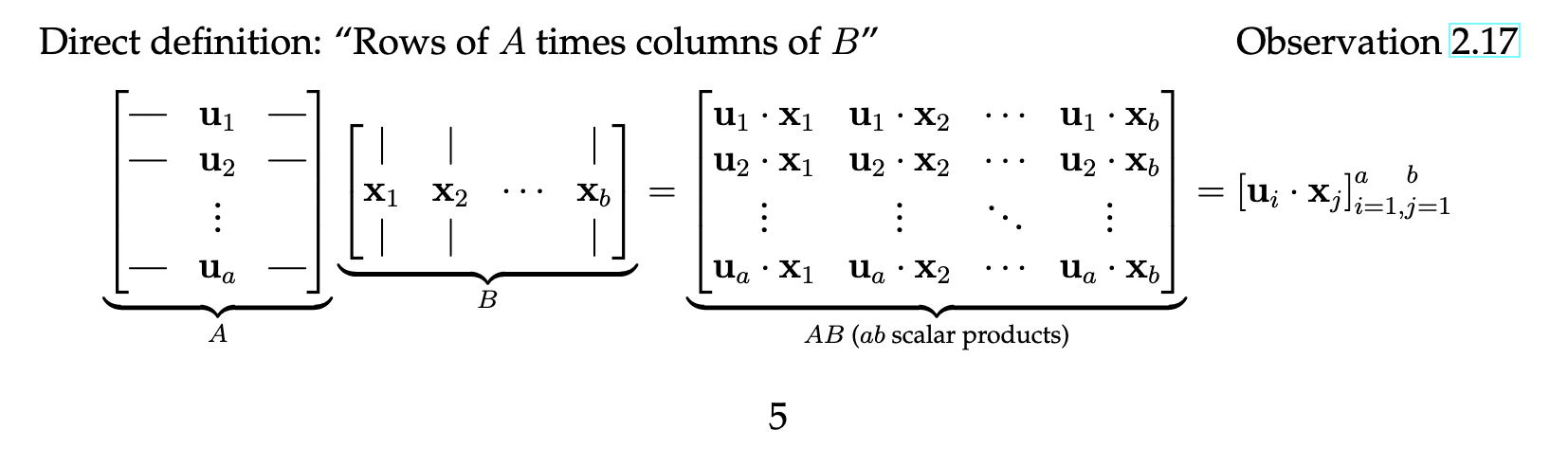

Matrix Multiplication Visualization

Matrix multiplication can be performed row by row, or column by column. For two matrices $A$ (of size $m \times n$) and $B$ (of size $n \times p$), the entry in row $i$ and column $j$ of the product matrix $AB$ is the dot product of the $i$-th row of $A$ with the $j$-th column of $B$:

$$(AB)_{ij} = \sum_{k=1}^{n} a_{ik} b_{kj}.$$

This shows the basic mechanism of matrix multiplication.
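The entry-by-entry rule translates almost literally into code. The following sketch assumes matrices stored as lists of rows; the names `dot` and `matmul` and the example values are illustrative.

```python
def dot(u, v):
    """Dot product of two vectors of equal length."""
    return sum(a * b for a, b in zip(u, v))

def matmul(A, B):
    """Product of an m x n matrix A and an n x p matrix B (lists of rows)."""
    m, n, p = len(A), len(B), len(B[0])
    if any(len(row) != n for row in A):
        raise ValueError("number of columns of A must equal number of rows of B")
    columns_of_B = list(zip(*B))
    # Entry (i, j) is the dot product of row i of A with column j of B.
    return [[dot(A[i], columns_of_B[j]) for j in range(p)] for i in range(m)]

A = [[1, 2, 3],
     [4, 5, 6]]        # 2 x 3
B = [[7, 8],
     [9, 10],
     [11, 12]]         # 3 x 2

C = matmul(A, B)       # 2 x 2
```

For these values, the top-left entry of `C` is 1·7 + 2·9 + 3·11 = 58, and the full product is `[[58, 64], [139, 154]]`.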

Commutativity and the Transpose of a Product

While $AB \neq BA$ in general, an important property of matrix transposition is that the transpose of a product reverses the order of multiplication:

$$(AB)^\top = B^\top A^\top.$$
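The reversal of order can be checked on a small example. This is a sketch with assumed helpers `matmul` and `transpose` and arbitrary 2 x 2 matrices, not a proof.

```python
def transpose(matrix):
    """Return the transpose of a matrix given as a list of rows."""
    return [list(column) for column in zip(*matrix)]

def matmul(A, B):
    """Product of two matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

A = [[1, 2],
     [3, 4]]
B = [[0, 1],
     [5, 6]]

lhs = transpose(matmul(A, B))                     # transpose of the product
rhs = matmul(transpose(B), transpose(A))          # transposes in reversed order
wrong_order = matmul(transpose(A), transpose(B))  # transposes in the original order
```

Here `lhs` and `rhs` agree, both being `[[10, 20], [13, 27]]`, while `wrong_order` is `[[3, 23], [4, 34]]`.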

Inner Product vs. Outer Product

The inner product involves multiplying corresponding elements of two vectors and summing the result. The outer product, on the other hand, multiplies each element of a column vector by each element of a row vector, resulting in a matrix.
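The contrast between the two products is easy to see in code. The sketch below uses illustrative names `inner` and `outer` and example vectors that do not come from the notes.

```python
def inner(v, w):
    """Inner product: multiply corresponding entries and sum. The result is a number."""
    return sum(a * b for a, b in zip(v, w))

def outer(v, w):
    """Outer product: entry (i, j) is v[i] * w[j]. The result is a len(v) x len(w) matrix."""
    return [[a * b for b in w] for a in v]

v = [1, 2, 3]
w = [4, 5, 6]

s = inner(v, w)   # a single number: 1*4 + 2*5 + 3*6
M = outer(v, w)   # a 3 x 3 matrix
```

For these vectors, `s` is 32 and `M` is `[[4, 5, 6], [8, 10, 12], [12, 15, 18]]`.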

Lemma 2.21 (Rank 1 Matrices)

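Let $A$ be an $m \times n$ matrix. The following statements are equivalent:

- $A$ has rank 1.
- There exist vectors $v \in \mathbb{R}^m$ and $w \in \mathbb{R}^n$, with $v \neq 0$ and $w \neq 0$, such that:

$$A = v w^\top.$$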

This shows that a rank-1 matrix can be expressed as the outer product of two vectors.
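One direction of the lemma can be seen on an example: in an outer product, every column is a multiple of the same vector. The sketch below assumes an illustrative helper `outer` and example vectors chosen for this purpose.

```python
def outer(v, w):
    """Outer product of v and w: entry (i, j) is v[i] * w[j]."""
    return [[a * b for b in w] for a in v]

v = [1, 2]         # nonzero vector with 2 entries
w = [3, 4, 5]      # nonzero vector with 3 entries

A = outer(v, w)    # 2 x 3 matrix

# Column j of A, read off entry by entry.
columns = [[row[j] for row in A] for j in range(len(w))]

# Column j should be w[j] times v, so all columns lie on the line spanned by v.
multiples_of_v = [[w_j * v_i for v_i in v] for w_j in w]
```

Here `A` is `[[3, 4, 5], [6, 8, 10]]`, and its columns are 3, 4, and 5 times `v`, which is why a single independent column remains.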

Distributive and Associative Properties of Matrix Multiplication

Matrix multiplication follows the distributive property:

$$A(B + C) = AB + AC \quad \text{and} \quad (A + B)C = AC + BC.$$

However, matrix multiplication is generally not commutative, meaning that $AB \neq BA$ in most cases. This reflects the fact that applying transformations in a different order results in different outcomes.

The associative property, on the other hand, holds for matrix multiplication:

$$(AB)C = A(BC).$$

This property shows that it doesn’t matter how we group the matrices when multiplying three or more together, as long as the order of multiplication is preserved.
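Both properties can be spot-checked on small integer matrices. This is a sketch only: the helpers `matmul` and `matadd` and the three example matrices are assumptions, and a numerical check on one example is not a proof.

```python
def matmul(A, B):
    """Product of two matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def matadd(A, B):
    """Entrywise sum of two matrices of the same size."""
    return [[a + b for a, b in zip(row_a, row_b)] for row_a, row_b in zip(A, B)]

A = [[1, 2],
     [3, 4]]
B = [[0, 1],
     [1, 0]]
C = [[2, 0],
     [1, 3]]

# Distributivity: A(B + C) = AB + AC
distributive_holds = matmul(A, matadd(B, C)) == matadd(matmul(A, B), matmul(A, C))

# Associativity: (AB)C = A(BC)
associative_holds = matmul(matmul(A, B), C) == matmul(A, matmul(B, C))

# Commutativity fails for this pair: AB and BA differ.
commutative_holds = matmul(A, B) == matmul(B, A)
```

With integer entries the comparisons are exact, so the first two flags are `True` and the last is `False` for this example.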

Each of these properties requires its own proof, and each holds only when the dimensions of the matrices make the sums and products involved well defined.